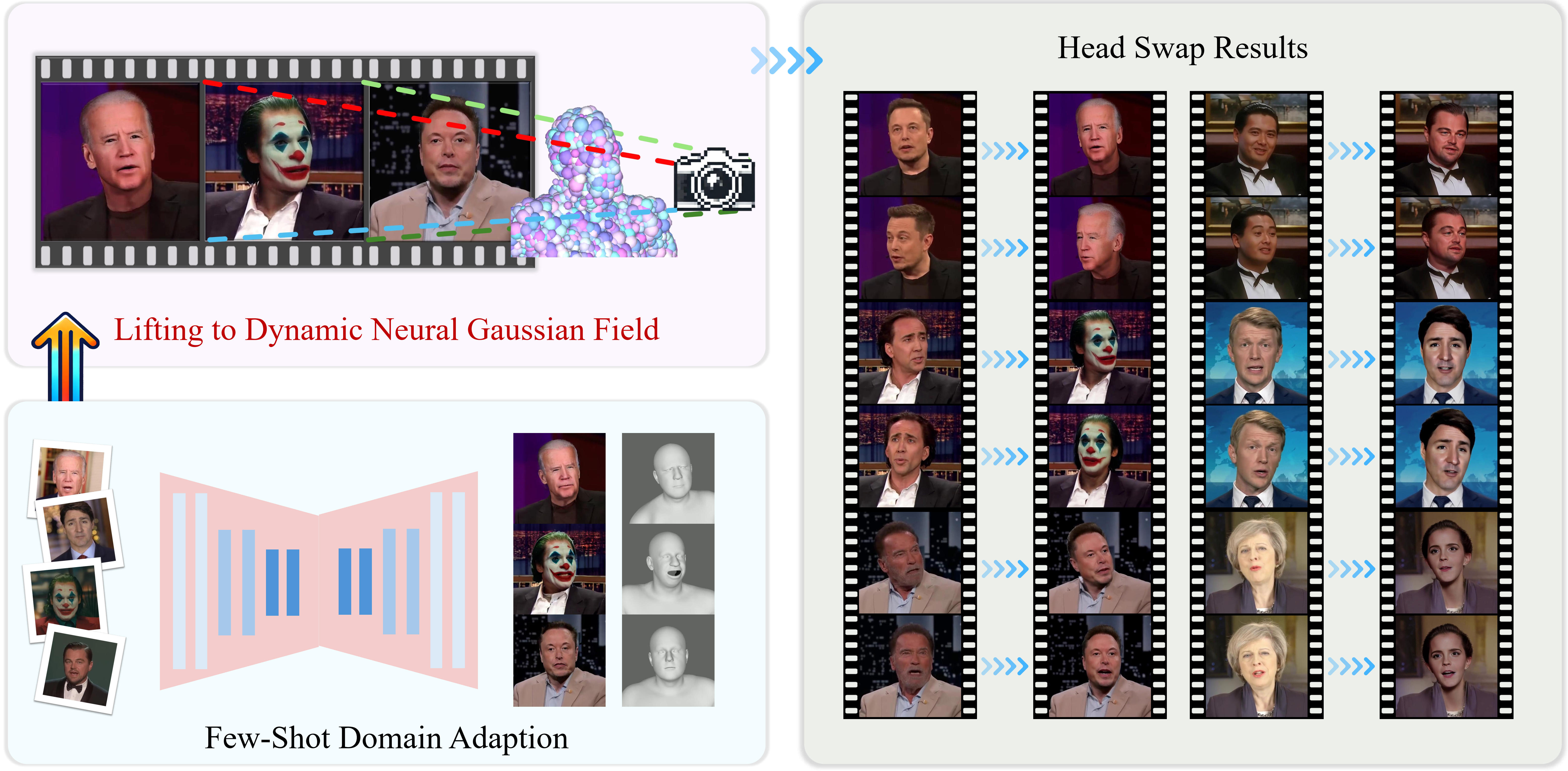

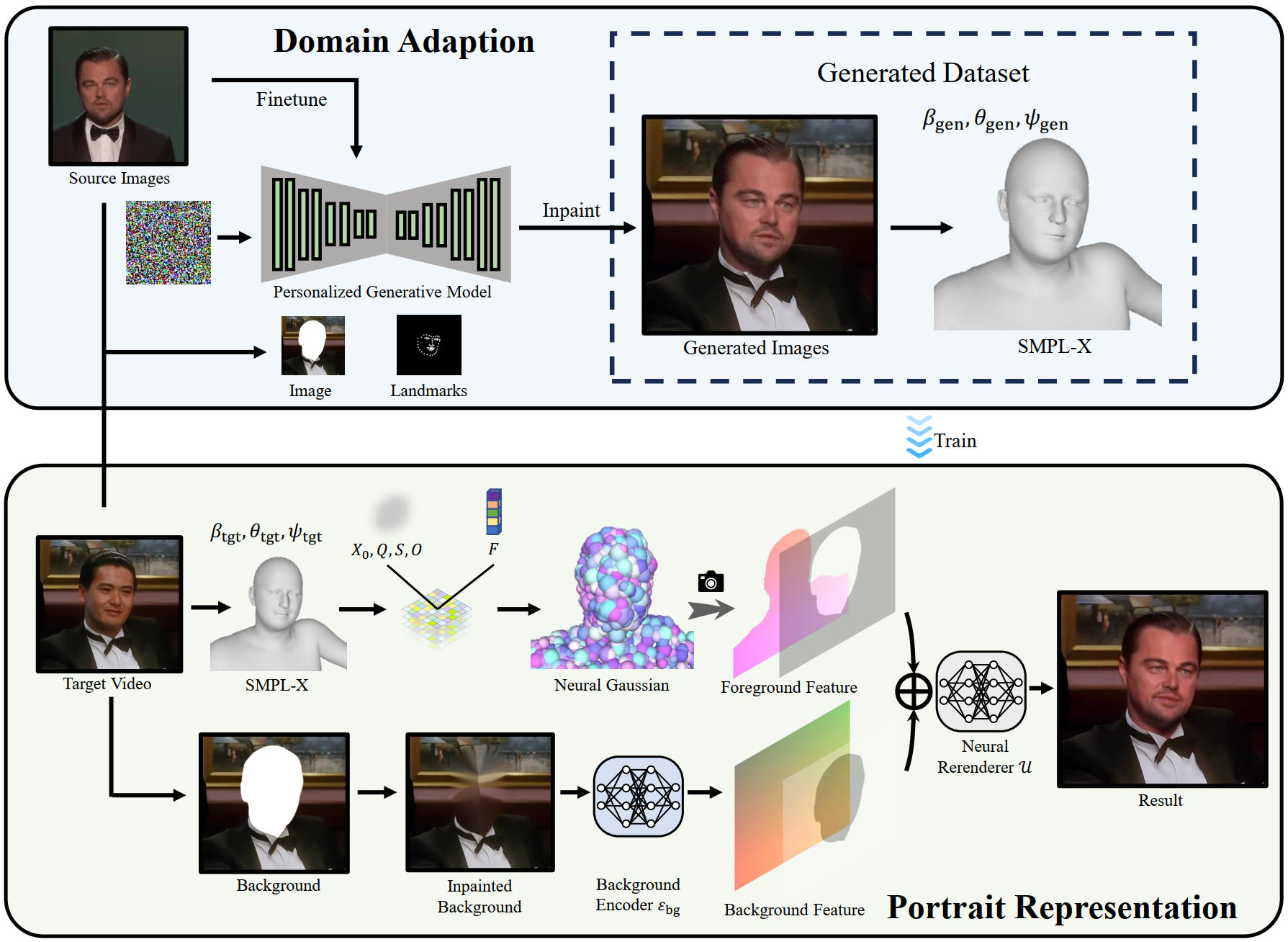

We present GSwap, a novel consistent and realistic video head-swapping system empowered by dynamic neural Gaussian portrait priors, which significantly advances the state of the art in face and head replacement. Unlike previous methods that rely primarily on 2D generative models or 3D Morphable Face Models (3DMM), our approach overcomes their inherent limitations, including poor 3D consistency, unnatural facial expressions, and restricted synthesis quality. Moreover, existing techniques struggle with full head-swapping tasks due to insufficient holistic head modeling and ineffective background blending, often resulting in visible artifacts and misalignments. To address these challenges, GSwap introduces an intrinsic 3D Gaussian feature field embedded within a full-body SMPL-X surface, effectively elevating 2D portrait videos into a dynamic neural Gaussian field. This innovation ensures high-fidelity, 3D-consistent portrait rendering while preserving natural head-torso relationships and seamless motion dynamics. To facilitate training, we adapt a pretrained 2D portrait generative model to the source head domain using only a few reference images, enabling efficient domain adaptation. Furthermore, we propose a neural re-rendering strategy that harmoniously integrates the synthesized foreground with the original background, eliminating blending artifacts and enhancing realism. Extensive experiments demonstrate that GSwap surpasses existing methods in multiple aspects, including visual quality, temporal coherence, identity preservation, and 3D consistency.

In this paper, we introduced GSwap, a video head-swapping system. We adapted a pretrained diffusion model to the source head domain and used it to inpaint the target video, which produces the training dataset. The generative capability of the diffusion model keeps identity similarity with the source images high. We then integrated a 3D Gaussian field onto the SMPL-X surface to improve the quality and temporal consistency of the results, and introduced a neural re-rendering module that seamlessly blends the foreground head region with the background.
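For context on the blending step, the following minimal NumPy sketch shows plain alpha compositing of a rendered head over the original frame. This is the kind of fixed blend that can leave seams at the mask boundary and that a learned re-rendering module is meant to improve on. It is not GSwap's module: the function name `composite`, the array layout, and the toy data are assumptions made purely for illustration.

```python
import numpy as np

def composite(foreground, background, alpha):
    """Alpha-blend a rendered head foreground over a background frame.

    Hypothetical helper for illustration only (not part of GSwap).
    foreground, background: float arrays of shape (H, W, 3) in [0, 1]
    alpha: float array of shape (H, W) in [0, 1], 1 where the head is opaque
    """
    a = alpha[..., None]  # add a channel axis so the mask broadcasts over RGB
    return a * foreground + (1.0 - a) * background

# Toy 2x2 frame: white foreground, black background, mask covers the left column.
fg = np.ones((2, 2, 3))
bg = np.zeros((2, 2, 3))
mask = np.array([[1.0, 0.0], [1.0, 0.0]])
frame = composite(fg, bg, mask)
```

With a hard mask like this one, each output pixel is taken entirely from one source, so any mismatch in lighting or geometry between the rendered head and the original frame shows up directly at the boundary.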

We compare GSwap with the state-of-the-art head-swapping model HeSer and the face-swapping models DeepLiveCam, DiffSwap, InfoSwap, BlendFace, FaceAdapter, and LivePortrait.

We ablate the retracking module and the neural re-rendering module, and study how the number of input images affects the results.

Additional results are shown here.

If you find GSwap useful for your work, please cite:

@article{zhou2026gswap,
  title={GSwap: Realistic Head Swapping with Dynamic Neural Gaussian Field},
  author={Zhou, Jingtao and Gao, Xuan and Liu, Dongyu and Hou, Junhui and Guo, Yudong and Zhang, Juyong},
  journal={IEEE Transactions on Visualization and Computer Graphics},
  year={2026},
  note={Published online March 4, 2026},
  doi={10.1109/TVCG.2026.3670403}
}